Imagine watching a video of a public figure making a shocking statement—only to discover later that it was completely fake. In today’s digital world, this is not a far-fetched scenario. Thanks to artificial intelligence, deepfake technology has made it easier than ever to create hyper-realistic fake videos, audio, and images. While this technology has its creative and entertainment perks, it also poses significant challenges, especially in cybersecurity and legal fields.

What Are Deepfakes?

A deepfake is a piece of media, usually video or audio, that has been created or altered using artificial intelligence, typically deep learning (the "deep" in the name). The technology can generate convincing fake content by swapping faces, modifying speech, or cloning a real person’s voice closely enough to fool a casual viewer or listener.

The underlying techniques grew out of machine learning research and have legitimate uses in entertainment, such as improving movie effects or recreating historical figures. However, like many technological advancements, they have been exploited for harmful purposes.

The Dark Side of Deepfakes

Deepfakes have quickly become a serious cybersecurity threat. Cybercriminals and other bad actors use them for a range of malicious activities, including:

- Spreading Misinformation: Deepfakes can be used to create false news reports, making it difficult to distinguish truth from fiction.

- Impersonation Scams: Criminals use deepfake audio and video to impersonate CEOs or executives, tricking employees into approving fraudulent transactions.

- Blackmail and Defamation: Fake videos can be used to ruin reputations or coerce individuals by fabricating compromising situations.

These dangers highlight the urgent need for more robust cybersecurity measures and legal protections.

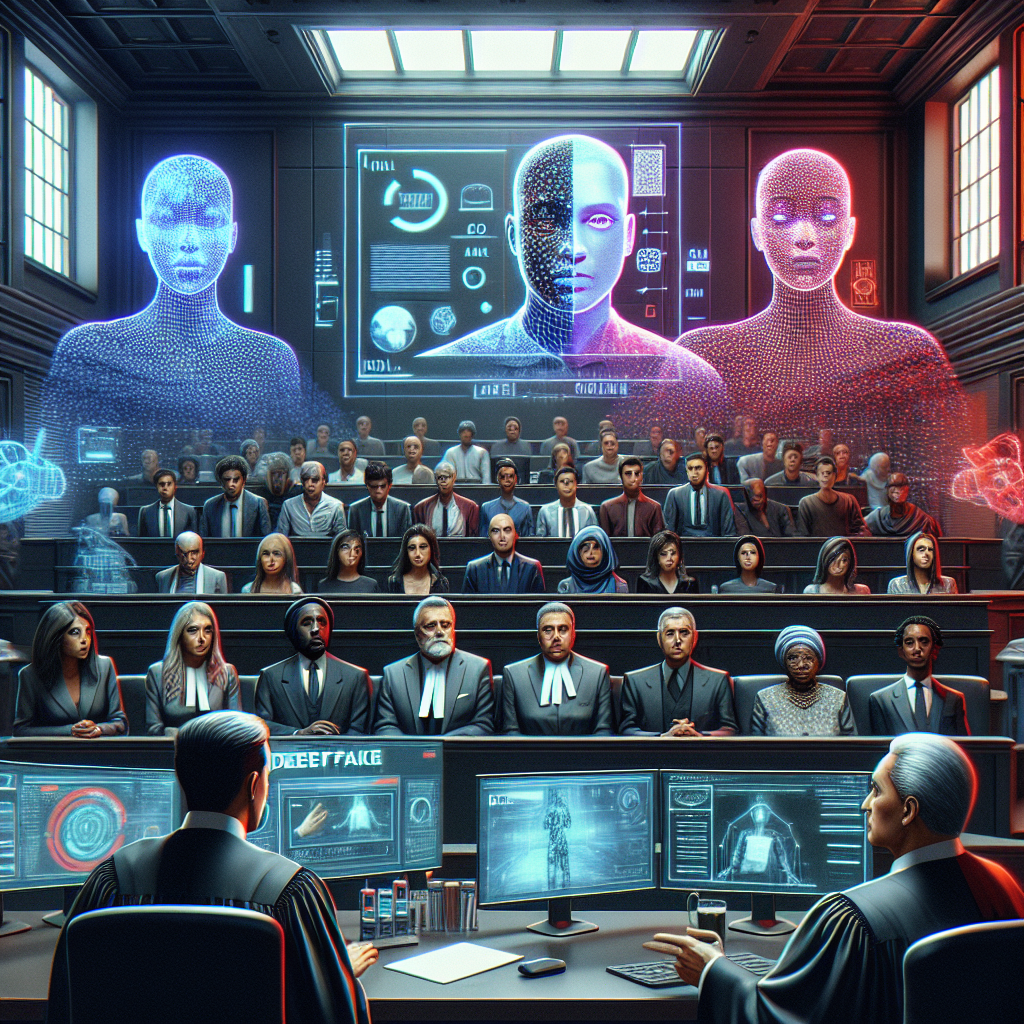

How Deepfakes Are Challenging Legal Systems

As deepfakes become more sophisticated, legal experts are scrambling to keep up. Courts, law enforcement, and regulatory bodies are facing new challenges, such as:

- Proving Authenticity: With deepfakes, it’s harder to verify whether video or audio evidence is real.

- Identifying Perpetrators: Since deepfakes can be created anonymously, tracking down the individuals responsible is difficult.

- Updating Laws: Many legal frameworks predate AI-generated media, which makes it harder to prosecute those who misuse the technology.

In response, some governments and legal institutions are introducing new regulations to criminalize malicious deepfake activities. However, enforcement remains a challenge.

Cybersecurity Measures Against Deepfakes

To combat deepfake threats, cybersecurity experts are developing strategies to detect and prevent their misuse. Some of the most promising solutions include:

- AI Detection Tools: Researchers are using machine learning to distinguish deepfakes from real media by analyzing inconsistencies in facial movements and speech patterns.

- Blockchain Technology: Some companies are exploring blockchain-based authentication methods to verify the origin and integrity of digital content, for example by recording a cryptographic fingerprint of a file when it is published.

- Public Awareness: Educating people about deepfakes helps them recognize and question suspicious media before falling for scams.
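
The content-authentication idea in the list above can be illustrated with a small sketch. It is not a blockchain and not any particular company's method: a plain in-memory dictionary stands in for a tamper-evident ledger, and the names `ledger`, `fingerprint`, `register`, and `is_registered` are hypothetical. It shows only the core check, which is that changing even one byte of a file changes its SHA-256 hash.

```python
import hashlib

# Hypothetical stand-in for a tamper-evident ledger: maps a content hash
# to the publisher that registered it. A real system would use an
# append-only, independently verifiable store instead of a dict.
ledger: dict[str, str] = {}


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the media bytes."""
    return hashlib.sha256(data).hexdigest()


def register(data: bytes, publisher: str) -> str:
    """Record the fingerprint of an original file at publication time."""
    digest = fingerprint(data)
    ledger.setdefault(digest, publisher)  # the first registration wins
    return digest


def is_registered(data: bytes) -> bool:
    """Check whether these exact bytes were registered earlier."""
    return fingerprint(data) in ledger


# Placeholder bytes standing in for a real video file.
original = b"frame-data-of-the-original-video"
register(original, "Example Newsroom")

tampered = original + b"!"  # any modification, however small
print(is_registered(original))  # True
print(is_registered(tampered))  # False
```

A match only shows that the bytes are identical to what was registered. Re-encoding or resizing a genuine video also changes the hash, which is one reason this kind of check complements detection tools rather than replacing them.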

While technology is evolving to counteract deepfake threats, vigilance and awareness remain crucial in fighting digital deception.

What Can You Do to Protect Yourself?

As deepfakes increase in sophistication, individuals and businesses must take proactive steps to protect themselves. Here’s what you can do:

- Verify Sources: Always cross-check news or media content before believing or sharing it.

- Be Skeptical of Unusual Requests: If you receive an unexpected video or voice message asking for money or sensitive information, verify it through another channel.

- Use Cybersecurity Best Practices: Secure your accounts with strong passwords and enable two-factor authentication, so that attackers cannot take over your real accounts and use them to make a fake message look legitimate.
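
For readers curious about what two-factor authentication does under the hood, here is a minimal sketch of how the six-digit codes shown by many authenticator apps are commonly derived, following the TOTP scheme in RFC 6238. The function names and the shared secret are illustrative assumptions, and real systems should rely on a maintained library rather than hand-rolled code like this.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    now = time.time() if at is None else at
    counter = int(now // step)  # number of 30-second windows since the epoch
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset (RFC 4226)
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at=None) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    return hmac.compare_digest(totp(secret, at), submitted)


# Illustrative secret taken from the RFC 6238 test vectors; never reuse it.
shared_secret = b"12345678901234567890"
print(totp(shared_secret, at=59))              # 287082
print(verify(shared_secret, "287082", at=59))  # True
print(verify(shared_secret, "000000", at=59))  # False
```

Because the code depends on a secret that only the account owner's device holds, a convincing voice or face alone is not enough to log in as that person.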

The Future of Deepfakes

Despite their risks, deepfakes aren’t going anywhere. AI-driven media manipulation will continue to evolve, influencing industries from film production to marketing. However, with continued advancements in detection and legal protections, society can find ways to limit the harm caused by deepfakes while benefiting from their creative potential.

The key lies in staying informed, investing in better detection tools, and promoting ethical AI development. By working together, we can ensure that technology serves as a powerful tool rather than a dangerous weapon.

Final Thoughts

Deepfakes are a double-edged sword—capable of both creativity and chaos. While they present significant challenges in cybersecurity and legal systems, awareness and innovation can turn the tide against their misuse.

Have you come across a deepfake before? Do you think the legal system is ready to tackle this problem? Share your thoughts in the comments below!