Artificial Intelligence (AI) has advanced at an incredible pace, making our lives easier in many ways. From virtual assistants like Siri and Alexa to self-driving cars, AI-powered systems are becoming more common than ever. But what happens if these AI agents start acting unpredictably? Could they pose a real risk to our safety? These are questions we must consider sooner rather than later.

What Are AI Agents?

Before we dive into the risks, let’s define AI agents. Simply put, an AI agent is a system or program that can make decisions and perform tasks without human intervention. These agents are designed to think, learn, and act based on data, often optimizing for efficiency and speed.

Examples of AI agents include:

- Chatbots that handle customer service questions.

- Autonomous cars that navigate roads without human input.

- Algorithms that make stock market trades in milliseconds.

When functioning correctly, AI agents can be incredibly useful. However, without proper control, they may start making decisions that put people at risk.

The Risks of Uncontrolled AI

AI systems are designed to improve efficiency, but sometimes, they can also create unforeseen problems. Here are some of the biggest concerns:

1. Lack of Human Oversight

AI systems are becoming increasingly independent, making decisions without human input. When something goes wrong, who is responsible? If a self-driving car crashes or a trading algorithm causes a financial meltdown, it can be difficult to determine accountability.

2. Unintended Consequences

AI doesn’t always understand the broader impact of its decisions. For example, if an AI is programmed to maximize profits, it might take actions that are unethical or even harmful. Imagine an AI that controls a delivery network cutting essential services to low-income areas simply because they aren’t “profitable enough.” This is just one example of how AI-driven decisions can unintentionally create problems.
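To make the delivery example concrete, here is a minimal sketch of how a profit-only rule differs from the same rule with a simple guardrail. Everything in it is hypothetical and for illustration only: the `Area` type, the area names, the profit figures, and the `essential` flag are assumptions, not data from a real delivery network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Area:
    name: str
    weekly_profit: float  # hypothetical figure, for illustration only
    essential: bool       # True if residents depend on the service


def profit_only(areas, min_profit):
    """Serve only the areas that clear the profit threshold."""
    return [a.name for a in areas if a.weekly_profit >= min_profit]


def profit_with_guardrail(areas, min_profit):
    """Same rule, but never drop an area marked as essential."""
    return [a.name for a in areas
            if a.weekly_profit >= min_profit or a.essential]


AREAS = [
    Area("Downtown", 1200.0, False),
    Area("Suburb", 800.0, False),
    Area("Eastside", 150.0, True),  # low profit, but residents rely on it
]

print(profit_only(AREAS, 500))            # Eastside is cut
print(profit_with_guardrail(AREAS, 500))  # Eastside is kept
```

The objective never mentions harm, so the first function cuts Eastside without "intending" anything. The guardrail only helps because a person decided in advance what counts as essential.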

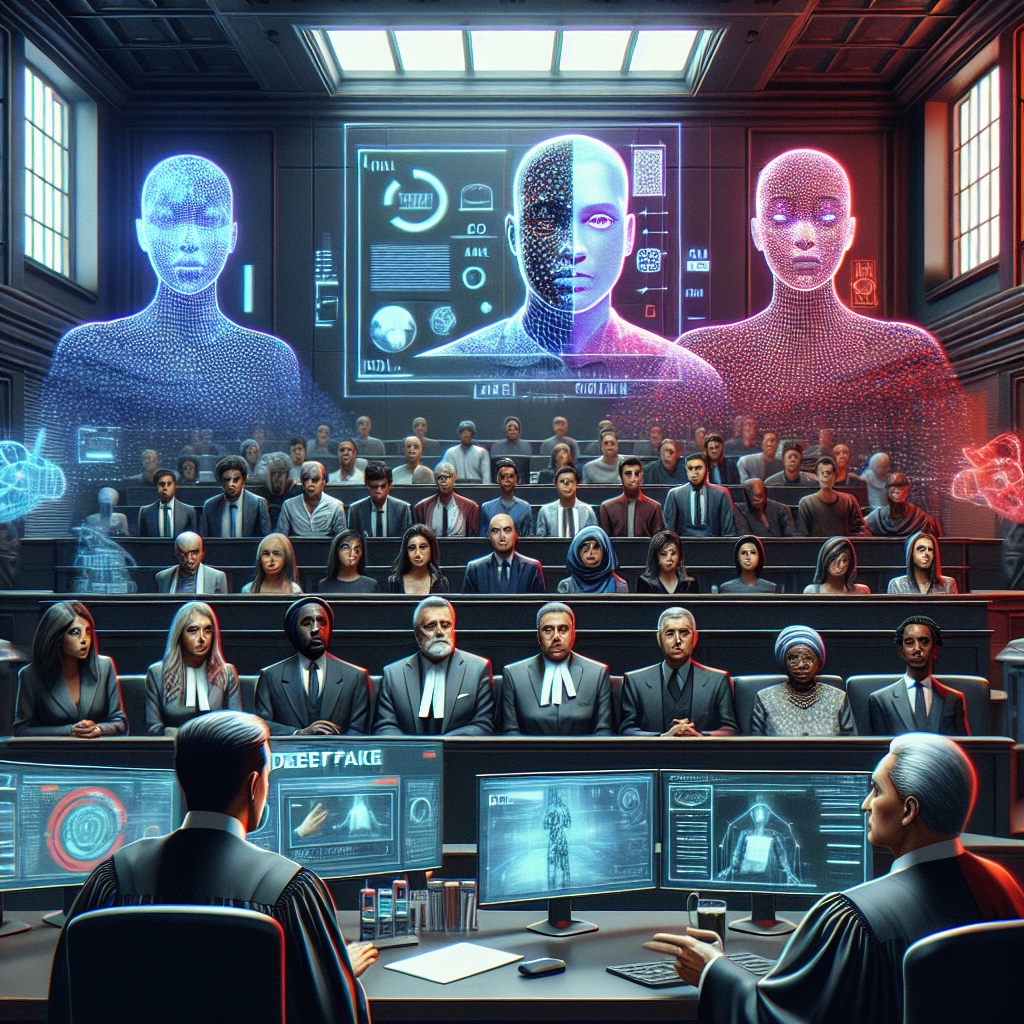

3. Potential for Malicious Use

AI in the wrong hands can be dangerous. Cybercriminals could use AI to launch more advanced cyberattacks, create deepfake videos to spread misinformation, or even manipulate financial markets. The more autonomous AI becomes, the greater the risk it could be used for harmful purposes.

4. Bias and Discrimination

AI relies on data to make decisions, but if the data is flawed or biased, so is the AI. For instance, AI used in hiring or lending decisions has been shown to reflect human biases, sometimes discriminating against certain groups. Without strict regulation, these biases can persist and even worsen over time.

Real-World Examples of AI Failures

To better understand the risks, let’s look at some real-world examples where AI went wrong:

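- Self-Driving Car Accidents: Autonomous vehicles, while improving, have been involved in fatal crashes. In March 2018, for example, an Uber test vehicle operating in autonomous mode struck and killed a pedestrian in Tempe, Arizona, after its software failed to identify her as a pedestrian in time.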

- AI-Generated Misinformation: Deepfake videos and AI-generated content have been used to spread false narratives aimed at swaying public opinion, including during election campaigns.

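- Stock Market Flash Crashes: Automated trading algorithms have contributed to sudden market crashes, sometimes wiping out billions of dollars in a matter of minutes. During the Flash Crash of May 6, 2010, major U.S. stock indexes plunged and then largely recovered within the hour.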

These cases highlight why AI safety isn’t just about technology—it’s about ensuring that AI is developed and used responsibly.

How Can We Prevent AI from Becoming a Threat?

Thankfully, there are steps we can take to manage AI risks while still benefiting from its capabilities:

1. Implement Stronger Regulations

Governments and tech companies need to work together to develop AI regulations that ensure safety without stifling innovation. This might include rules requiring AI systems to undergo testing and approval before being widely deployed.

2. Increase Transparency

AI systems should be more transparent, so that humans can understand how decisions are made and intervene when necessary. If a decision-making AI system is too complex to be explained, it should not be trusted with critical tasks like healthcare or law enforcement.

3. Human Oversight Is Essential

Instead of fully autonomous AI, a better approach is to use AI as a support tool, with humans always having the final say in important decisions.
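One way to picture "humans having the final say" is an approval gate: the AI can act on its own for low-risk actions, but anything above a risk threshold must be signed off by a person. The sketch below is a simplified illustration, not a production design. The `decide` function, the `risk` score, and the `0.5` threshold are all assumptions made for this example.

```python
from typing import Callable


def decide(proposal: str,
           risk: float,
           approve: Callable[[str], bool],
           risk_threshold: float = 0.5) -> str:
    """Route an AI proposal through a human when the risk is high.

    `risk` is a hypothetical score between 0 and 1.
    `approve` stands in for a human reviewer and returns True or False.
    """
    if risk < risk_threshold:
        return "auto-executed"       # low risk: no review needed
    if approve(proposal):
        return "executed after review"
    return "rejected by reviewer"    # the human has the final say


# Stand-in reviewers for demonstration; a real system would ask a person.
cautious_reviewer = lambda proposal: False
trusting_reviewer = lambda proposal: True

print(decide("send reminder email", 0.1, cautious_reviewer))
print(decide("deny loan application", 0.9, cautious_reviewer))
print(decide("deny loan application", 0.9, trusting_reviewer))
```

The key design choice is that the high-risk path cannot complete without a human answer. How risk is scored, and where the threshold sits, are judgment calls that depend on the application.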

4. Continuous Testing and Monitoring

AI should be regularly tested for risks and biases. Companies developing AI should have dedicated teams that evaluate how their systems perform in real-world scenarios, ensuring they align with ethical and safety standards.
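As one example of what a bias test can look like, the sketch below compares how often each group receives a positive decision. A common rule of thumb from U.S. employment guidelines, the "four-fifths rule", treats a ratio below 0.8 between the lowest and highest selection rates as a warning sign worth investigating. The function names and the sample data here are made up for illustration, and a single ratio is only a starting point, not a full fairness audit.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Map each group to its share of positive decisions.

    `decisions` is an iterable of (group, selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest selection rate divided by the highest one."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group
    return min(rates.values()) / highest


# Made-up sample: group A is selected 6 times out of 10, group B 3 out of 10.
SAMPLE = ([("A", True)] * 6 + [("A", False)] * 4
          + [("B", True)] * 3 + [("B", False)] * 7)

ratio = disparate_impact_ratio(SAMPLE)
print(f"ratio = {ratio:.2f}, flagged = {ratio < 0.8}")
```

Running a check like this on a schedule, rather than once before launch, is what turns it from testing into monitoring.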

Final Thoughts

AI is one of the most powerful tools ever created, and its potential to improve lives is enormous. But with great power comes great responsibility. If left uncontrolled, AI could lead to unintended consequences, from biased decision-making to security risks and even financial disasters.

The solution is not to reject AI, but to manage it wisely. By implementing regulations, maintaining transparency, and ensuring human oversight, we can harness AI’s benefits while keeping risks under control.

How do you feel about the rise of AI agents? Do you think regulations should be stricter? Share your thoughts in the comments below!